Unveiling the Engine: How Does Artificial Intelligence Actually Work?

Explore the intricate processes behind AI, from data digestion to intelligent decision-making.

Artificial Intelligence (AI) has rapidly moved from science fiction to a tangible part of our daily lives, powering everything from recommendation engines to complex scientific research. But what exactly happens "behind the scenes"? How does a machine learn, reason, and make decisions? This exploration delves into the core mechanisms that drive AI systems.

Core Insights: Understanding AI Fundamentals

- Data is the Fuel: AI systems learn by analyzing vast amounts of data to identify patterns, correlations, and features, which form the basis for their intelligence.

- Algorithms are the Brain: Intelligent algorithms, particularly those within machine learning, provide the instructions that allow AI to process data, learn from it, and improve performance over time without explicit programming for every task.

- Learning Mimics Cognition: AI, especially through techniques like neural networks inspired by the human brain, simulates cognitive processes like pattern recognition, problem-solving, and decision-making through iterative training and refinement.

The Building Blocks of AI: Data, Algorithms, and Learning

At its heart, AI is the science of making machines smart. It's a broad field encompassing various techniques, but the fundamental principle involves creating systems capable of performing tasks that traditionally require human intelligence. This capability is built upon several key components:

The Crucial Role of Data

Modern AI systems are fundamentally data-driven, and most need large datasets to learn effectively. Think of data as the textbook from which the AI studies.

Data Ingestion and Preparation

The process begins with collecting relevant data, which can be structured (like spreadsheets or databases) or unstructured (like text documents, images, audio files). This raw data is often messy, containing errors, duplicates, or irrelevant information. Therefore, a critical step is data preparation (or pre-processing), which involves cleaning, formatting, and organizing the data to make it suitable for AI algorithms. The quality and quantity of this prepared data significantly impact the AI's learning ability and subsequent performance.
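The cleaning step described above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the `prepare_records` function and the sample records are hypothetical, and real projects usually rely on dedicated data-processing libraries.

```python
def prepare_records(raw_records):
    """Clean (text, label) pairs: normalize text, drop bad rows, remove duplicates."""
    seen = set()
    cleaned = []
    for text, label in raw_records:
        if text is None or label is None:
            continue  # drop rows with missing values
        normalized = " ".join(text.lower().split())  # lowercase, collapse whitespace
        if not normalized:
            continue  # drop rows that are empty after cleaning
        record = (normalized, label)
        if record in seen:
            continue  # drop exact duplicates
        seen.add(record)
        cleaned.append(record)
    return cleaned


# Hypothetical raw data containing a duplicate, a missing value, and a blank row.
raw = [
    ("  Win a FREE prize now ", "spam"),
    ("win a free prize now", "spam"),
    ("Meeting moved to 3pm", "ham"),
    (None, "ham"),
    ("   ", "spam"),
]
prepared = prepare_records(raw)
print(prepared)
```

Of the five raw rows, only two distinct, usable records survive, which is typical of how much raw data can shrink during preparation.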

Intelligent Algorithms: The Processing Power

Algorithms are the sets of rules or instructions that AI systems follow to process data, learn patterns, and make decisions. They are the core logic engine of AI.

Types of Algorithms

Different AI tasks call for different types of algorithms. Machine Learning (ML) algorithms allow systems to learn from data, while Deep Learning (DL), a subset of ML, uses multi-layered neural networks to learn intricate patterns from vast datasets. Reinforcement Learning, another branch of ML, learns through trial and error guided by rewards. Application areas such as Natural Language Processing (NLP), which deals with understanding human language, draw on these same families of algorithms.
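To make "learning through trial and error" concrete, here is a small sketch of an epsilon-greedy multi-armed bandit, one of the simplest reinforcement-learning settings. The reward probabilities, step count, and function name are illustrative assumptions, not taken from any particular system.

```python
import random


def run_bandit(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Estimate the value of each action purely from observed rewards."""
    rng = random.Random(seed)
    counts = [0] * len(reward_probs)    # how often each action was tried
    values = [0.0] * len(reward_probs)  # running average reward per action
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(len(reward_probs))  # explore: try a random action
        else:
            arm = max(range(len(values)), key=values.__getitem__)  # exploit best so far
        reward = 1.0 if rng.random() < reward_probs[arm] else 0.0
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]  # incremental mean update
    return values, counts


# Assumed environment: three actions that pay off 20%, 50%, and 80% of the time.
values, counts = run_bandit([0.2, 0.5, 0.8])
best_arm = max(range(len(values)), key=values.__getitem__)
print("estimated values:", [round(v, 2) for v in values])
print("preferred action:", best_arm)
```

The agent is never told which action is best; it discovers this by occasionally exploring and otherwise repeating whatever has worked so far.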

The Learning Process: Iteration and Refinement

Unlike traditional software that follows explicit instructions, AI systems learn from experience. This learning process is typically iterative.

Training Models

During training, the prepared data is fed into the chosen algorithm. The AI model adjusts its internal parameters (such as the weights on connections between 'neurons' in a neural network) to minimize a loss, a numerical measure of the difference between its predictions and the actual outcomes in the training data. This process is repeated thousands or even millions of times, allowing the model to progressively improve its accuracy in identifying patterns or making predictions.
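As a rough illustration of this adjust-and-repeat loop, the sketch below fits a one-variable linear model with gradient descent on mean squared error. The data, learning rate, and epoch count are assumptions chosen for the example; real models have far more parameters but follow the same pattern.

```python
def train_linear_model(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y ~ w * x + b by repeatedly nudging w and b to reduce squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for x, y in zip(xs, ys):
            error = (w * x + b) - y       # prediction minus actual outcome
            grad_w += 2 * error * x / n   # gradient of mean squared error w.r.t. w
            grad_b += 2 * error / n       # gradient of mean squared error w.r.t. b
        w -= learning_rate * grad_w       # step against the gradient
        b -= learning_rate * grad_b
    return w, b


# Toy training data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = train_linear_model(xs, ys)
print(f"learned w={w:.3f}, b={b:.3f}")
```

Starting from `w = 0` and `b = 0`, the loop recovers values very close to the slope 2 and intercept 1 that generated the data.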

The AI Operational Workflow: A Step-by-Step Look

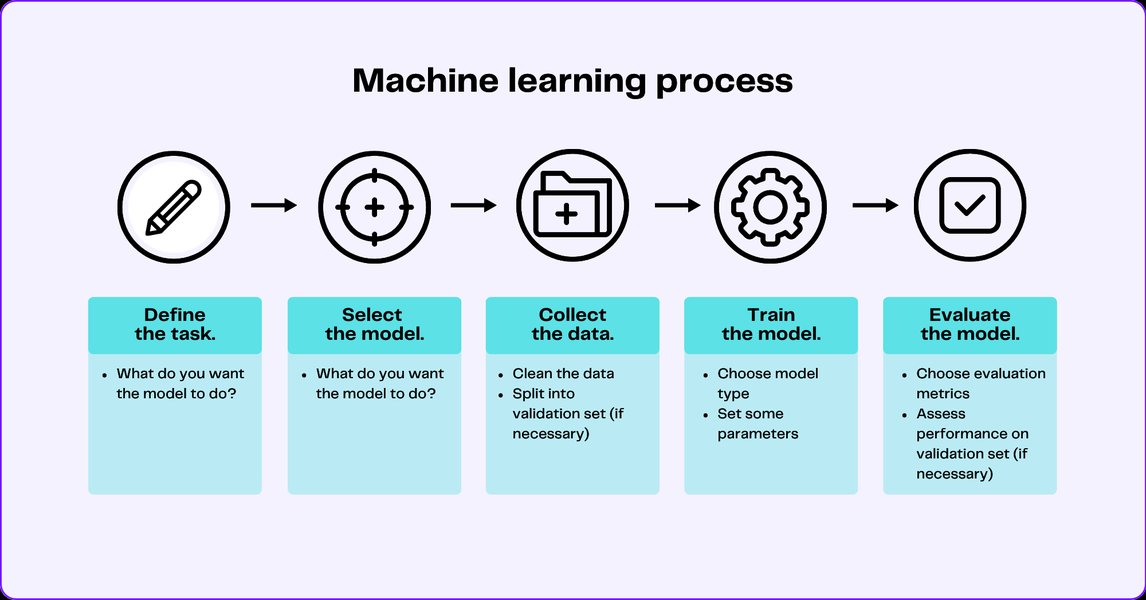

Creating and deploying an AI system involves a structured workflow, ensuring the technology is effective, reliable, and achieves its intended purpose.

1. Defining the Problem & Collecting Data

The first step is clearly defining the problem the AI aims to solve (e.g., identifying spam emails, predicting stock prices, generating images). Based on this, relevant data is collected and prepared, as discussed earlier. This stage is foundational; the right data is crucial for success.

2. Choosing the Right Tools: Algorithms and Models

Developers select the most appropriate AI techniques and algorithms based on the problem and the available data. This might involve choosing between different machine learning models (like decision trees, support vector machines, or neural networks) or selecting specific architectures for deep learning.

[Figure: Data Preprocessing -> Model Training -> Model Evaluation -> Deployment]

A typical workflow for building machine learning models involves several stages from data input to deployment.

3. Training the AI Model

This is where the learning happens. The chosen model is trained using the prepared dataset. The system iteratively adjusts its internal parameters to better capture the underlying patterns in the data. This phase often requires significant computational resources and time, especially for complex models like deep neural networks.

4. Evaluating Performance: Validation and Testing

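Once the initial training is complete, the model's performance must be evaluated on data it has not seen during training. Typically a validation set guides choices made during development (such as model settings), and a separate test set gives the final performance estimate. This step is crucial to ensure the model generalizes well to new, unseen data and hasn't simply "memorized" the training examples (a problem known as overfitting). Metrics such as accuracy (the share of all predictions that are correct), precision (the share of predicted positives that are truly positive), and recall (the share of actual positives the model finds) are used to assess performance.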
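The sketch below shows one simple way to hold out unseen data and compute accuracy, precision, and recall by hand. The helper names, the 25% hold-out fraction, and the example labels are assumptions for illustration; libraries such as scikit-learn provide equivalent, more complete utilities.

```python
import random


def train_test_split(examples, test_fraction=0.25, seed=0):
    """Shuffle a copy of the data and hold out a fraction the model never trains on."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def classification_metrics(y_true, y_pred, positive="spam"):
    """Compute accuracy, precision, and recall for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


train_set, test_set = train_test_split(list(range(8)))

# Hypothetical true labels and model predictions on a held-out set.
y_true = ["spam", "spam", "ham", "ham", "spam", "ham", "ham", "ham"]
y_pred = ["spam", "ham", "ham", "spam", "spam", "ham", "ham", "ham"]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Here the model gets 6 of 8 predictions right (accuracy 0.75), but it misses one real spam message and flags one legitimate message, so precision and recall are both 2/3.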

5. Deploying the Model

After successful evaluation, the trained AI model is deployed into a real-world application. This could be integrating a recommendation engine into a streaming service, deploying a chatbot on a website, or incorporating predictive maintenance AI into industrial machinery. In this phase, the AI performs inference – using its learned knowledge to make predictions or decisions on new, live data.

6. Monitoring and Continuous Learning

AI systems are often not static. After deployment, their performance is continuously monitored, because the data they encounter can drift away from the data they were trained on. Some systems are designed to keep learning from new data, which lets them adapt to changing patterns over time. This might involve periodic retraining with updated datasets or techniques like online learning, where the model is updated incrementally as each new example arrives.
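As a hedged sketch of online learning, the class below updates a tiny linear model one observation at a time, so it can follow a pattern that changes after deployment. The class name, learning rate, and simulated data stream are all assumptions made for the example.

```python
class OnlineLinearModel:
    """A one-feature linear model updated incrementally, one example at a time."""

    def __init__(self, learning_rate=0.1):
        self.w = 0.0
        self.b = 0.0
        self.learning_rate = learning_rate

    def predict(self, x):
        return self.w * x + self.b

    def update(self, x, y):
        """Take a single gradient step on the squared error of one new example."""
        error = self.predict(x) - y
        self.w -= self.learning_rate * error * x
        self.b -= self.learning_rate * error
        return abs(error)


model = OnlineLinearModel()
inputs = [0.5, 1.0, 1.5, 2.0]

# Phase 1: the live data follows y = 2x.
for step in range(2000):
    x = inputs[step % len(inputs)]
    model.update(x, 2.0 * x)
before_shift = model.predict(2.0)

# Phase 2: the underlying pattern shifts to y = 3x and the model adapts.
for step in range(2000):
    x = inputs[step % len(inputs)]
    model.update(x, 3.0 * x)
after_shift = model.predict(2.0)

print(f"prediction for x=2 before shift: {before_shift:.2f}, after: {after_shift:.2f}")
```

No full retraining is needed here: each new example nudges the parameters, and the prediction for `x = 2` moves from about 4 to about 6 as the data changes.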

Mapping the AI Landscape

Understanding how AI works involves recognizing the different interconnected concepts and technologies. The following mindmap provides a visual overview of the key elements involved in the AI ecosystem.

- [...] Simulating Human Intelligence
  - Core Components
    - Data (Fuel for Learning)
      - Collection
      - Preparation (Cleaning, Formatting)
    - Algorithms (Instructions & Logic)
      - Pattern Recognition
      - Decision Making
    - Learning (Training & Improvement)
      - Iterative Process
      - Parameter Adjustment
  - Key Technologies & Subfields
    - Machine Learning (ML)
      - Supervised Learning
      - Unsupervised Learning
      - Reinforcement Learning
    - Deep Learning (DL)
      - Artificial Neural Networks (ANNs)
      - Convolutional NNs (CNNs)
      - Recurrent NNs (RNNs)
    - Natural Language Processing (NLP)
      - Text Analysis
      - Language Generation
      - Translation
    - Generative AI (GenAI)
      - Content Creation (Text, Images, Audio)
      - Retrieval-Augmented Generation (RAG)
  - Operational Workflow
    - Problem Definition
    - Data Collection & Prep
    - Model Selection & Training
    - Evaluation & Testing
    - Deployment (Inference)
    - Monitoring & Continuous Learning
  - Applications
    - Recommendation Systems
    - Chatbots & Virtual Assistants
    - Image Recognition
    - Autonomous Systems
    - Medical Diagnosis
    - Financial Modeling

This mindmap illustrates how fundamental components like data and algorithms feed into various AI technologies and subfields, following a structured workflow to create applications that impact numerous domains.

Key Technologies Powering Modern AI

Several specific technologies and subfields within AI are particularly important in understanding its current capabilities.

Machine Learning (ML)

As mentioned, ML is a core subset of AI focused on building systems that can learn from and make decisions based on data. Instead of being explicitly programmed for a task, ML algorithms use data to train a model that can then perform the task. Examples include spam filters learning to identify junk email or recommendation systems learning user preferences.

Deep Learning (DL) and Neural Networks

Deep Learning is a specialized type of ML that uses artificial neural networks (ANNs) with multiple layers (hence "deep"). These networks are inspired by the structure and function of the human brain, with interconnected nodes or 'neurons' processing information in layers.

Artificial Neural Networks process information through interconnected layers, mimicking biological brain structures.
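To show what "processing information in layers" means in practice, here is a minimal forward pass through a network with one hidden layer. The weights and biases are hand-picked for illustration rather than learned, and the layer sizes are an arbitrary assumption.

```python
import math


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(inputs, weights, biases, activation):
    """Each neuron computes a weighted sum of its inputs plus a bias, then an activation."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


# Illustrative, hand-picked parameters: 2 inputs -> 2 hidden neurons -> 1 output.
HIDDEN_WEIGHTS = [[0.5, -0.2], [0.3, 0.8]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -1.0]]
OUTPUT_BIASES = [0.5]


def forward(inputs):
    hidden = dense_layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    output = dense_layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES, sigmoid)
    return output[0]  # a value between 0 and 1


score = forward([1.0, 2.0])
print(f"network output: {score:.4f}")
```

Training a real network consists of adjusting the numbers in the weight and bias lists so that outputs like `score` match the desired answers; a "deep" network simply stacks many such layers.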

DL excels at finding complex patterns in large datasets, making it highly effective for tasks like image recognition (identifying objects in photos), natural language processing (understanding the meaning of text), and playing complex games. While powerful, the exact decision-making process within deep neural networks can sometimes be complex and difficult for humans to interpret fully, leading to the concept of the "black box" in AI.

Natural Language Processing (NLP)

NLP focuses on enabling computers to understand, interpret, and generate human language in a way that is meaningful. This involves tasks like language translation, sentiment analysis (determining the emotion in text), chatbots, and voice assistants like Siri or Alexa.

Generative AI (GenAI)

A more recent advancement, Generative AI refers to deep learning models capable of generating new, original content, such as text, images, music, or code, based on the patterns learned from their training data. Large Language Models (LLMs) like GPT are prominent examples. Techniques like Retrieval-Augmented Generation (RAG) enhance GenAI by allowing models to access and incorporate external, up-to-date information during content generation, improving accuracy and relevance.
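The retrieval half of RAG can be sketched as follows. This is a deliberately simplified illustration: it ranks documents by shared words, whereas real systems typically use learned embeddings and a vector index, and the final generation step (sending the prompt to a language model) is omitted. The function names and sample documents are hypothetical.

```python
import string


def tokenize(text):
    """Lowercase, strip punctuation, and split into a set of words."""
    cleaned = text.lower().translate(str.maketrans("", "", string.punctuation))
    return set(cleaned.split())


def retrieve(query, documents, top_k=1):
    """Rank documents by how many words they share with the query (a stand-in for
    the embedding similarity search used in practice)."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query, documents):
    """Combine retrieved context with the question; a generative model would then
    complete this prompt."""
    context = "\n".join(retrieve(query, documents))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


# Hypothetical external knowledge the model was not trained on.
documents = [
    "The office closes at 6pm on Fridays.",
    "Our refund policy allows returns within 30 days.",
    "The cafeteria serves lunch from noon.",
]
prompt = build_prompt("What is the refund policy?", documents)
print(prompt)
```

Because the relevant passage is placed directly in the prompt, the model can ground its answer in current information instead of relying only on what it learned during training.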

Comparing AI Subfields: A Radar Chart View

Different AI subfields possess distinct characteristics. The radar chart below provides an opinionated comparison based on typical implementations across several dimensions: Data Dependency (how much data is usually needed), Model Complexity, Interpretability (ease of understanding *why* a decision was made), Task Specificity (how specialized the application usually is), and Learning Speed (relative training time).

This chart highlights trade-offs: for example, Deep Learning and Generative AI offer high capability (handling complexity) but often require vast data, are harder to interpret, and take longer to train compared to more traditional ML approaches.

How AI Makes Decisions

Fundamentally, AI makes decisions or predictions by applying the patterns it learned during training to new data. When presented with a new input (e.g., a customer query, a medical image, market data), the AI system processes it through its learned model.

Pattern Matching and Prediction

The model identifies features in the new data that correspond to patterns learned from the training data. Based on these matches and the statistical relationships discovered during training, the AI generates an output. This could be a classification (e.g., "spam" or "not spam"), a prediction (e.g., expected rainfall tomorrow), a recommendation (e.g., movies you might like), or generated content (e.g., text summarizing an article).
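One classic way to turn learned statistical relationships into a classification is a Naive Bayes text classifier. The sketch below is a toy version with hypothetical training messages; it is one possible technique among many, not the method any specific product uses.

```python
import math
from collections import Counter


def train_naive_bayes(examples):
    """Count how often each word appears under each label."""
    word_counts = {}
    label_counts = Counter()
    vocab = set()
    for text, label in examples:
        label_counts[label] += 1
        counts = word_counts.setdefault(label, Counter())
        for word in text.lower().split():
            counts[word] += 1
            vocab.add(word)
    return word_counts, label_counts, vocab


def classify(text, model):
    """Pick the label whose learned word statistics best match the new text."""
    word_counts, label_counts, vocab = model
    total_examples = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, count in label_counts.items():
        score = math.log(count / total_examples)  # prior: how common the label is
        denominator = sum(word_counts[label].values()) + len(vocab)
        for word in text.lower().split():
            if word in vocab:  # words never seen in training carry no evidence
                # Add-one (Laplace) smoothing avoids zero probabilities.
                score += math.log((word_counts[label][word] + 1) / denominator)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Hypothetical training data.
training = [
    ("win a free prize now", "spam"),
    ("free money claim your prize", "spam"),
    ("meeting moved to friday", "ham"),
    ("lunch with the team on friday", "ham"),
]
model = train_naive_bayes(training)
print(classify("claim your free prize", model))
print(classify("team meeting on friday", model))
```

The first message shares its words with the spam examples and the second with the legitimate ones, so the classifier labels them "spam" and "ham" respectively, even though neither exact message appeared in training.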

The ability of AI to sift through enormous datasets and detect subtle, complex correlations often allows it to make predictions or decisions with speed and accuracy that can surpass human capabilities in specific, well-defined tasks.

Summary Table: Key AI Concepts

This table summarizes some of the core concepts and technologies discussed:

| Concept/Technology | Description | Primary Function | Example Applications |
|---|---|---|---|
| Artificial Intelligence (AI) | Broad field focused on creating machines capable of intelligent behavior. | Simulate human cognitive functions like learning, reasoning, problem-solving. | All subsequent examples fall under AI. |
| Machine Learning (ML) | Subset of AI where systems learn from data without explicit programming. | Identify patterns, make predictions based on data. | Spam filters, recommendation engines, predictive maintenance. |
| Deep Learning (DL) | Subset of ML using multi-layered neural networks. | Learn complex patterns from large datasets. | Image recognition, natural language understanding, autonomous driving. |
| Artificial Neural Networks (ANNs) | Computational models inspired by the human brain's structure. | Process information through interconnected nodes (neurons) in layers. | Core component of Deep Learning models. |
| Natural Language Processing (NLP) | Field enabling computers to understand and process human language. | Interpret, analyze, and generate text or speech. | Chatbots, language translation, sentiment analysis, voice assistants. |
| Generative AI (GenAI) | AI capable of creating novel content (text, images, etc.). | Generate original outputs based on learned patterns. | Content creation (writing, art), data augmentation, drug discovery. |
| Data | Information used to train and operate AI systems. | Provide the examples and experience for AI learning. | Images, text, numbers, sensor readings used in AI models. |
| Algorithm | A set of rules or instructions followed by the AI system. | Define how data is processed and learned from. | Decision trees, regression algorithms, clustering algorithms, backpropagation (for NNs). |

Last updated May 4, 2025