Understanding Regularization in Small Datasets

Navigating the Balance Between Overfitting and Underfitting

Key Highlights

- Impact of Strong Regularization: Over-penalizing can hinder the learning of important patterns.

- Balancing Act: It is crucial to find a regularization strength that prevents overfitting without causing underfitting.

- Alternatives and Best Practices: Techniques such as milder regularization, feature selection, and data augmentation are effective strategies.

Introduction to Regularization and Small Datasets

Regularization is a foundational machine learning technique for preventing overfitting and improving how well a model generalizes. It adds a penalty term to the loss function that discourages the model from fitting overly complex or noisy patterns in the training data. On a small dataset, however, strong regularization can impede the model's ability to learn the underlying patterns.

Overfitting occurs when a model learns not just the underlying trends but also the noise in the training data. Regularization counters this by constraining the model parameters so that the model does not become overly tuned to the training data. With few data points, however, a penalty that is too strong can push the model into underfitting, where it is too simple to capture important relationships in the data.

Understanding the Role of Regularization

Concept and Techniques

The two most common penalty-based regularization techniques are L1 regularization (Lasso) and L2 regularization (Ridge). Both work by adding a penalty term to the loss function:

L1 Regularization (Lasso)

L1 regularization penalizes the sum of the absolute values of the model's weights. This penalty can force some weights to become exactly zero, which effectively performs feature selection. With small datasets, L1 regularization is often advantageous because it removes less important features, yielding a model that is less prone to capturing noise.

L2 Regularization (Ridge)

L2 regularization penalizes the sum of the squared weights. It shrinks the weights toward zero without typically setting any of them to exactly zero, which yields smoother solutions and spreads influence across features. On small datasets, however, a large penalty can shrink the weights so much that the model fails to learn significant relationships.
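
The contrast between the two penalties can be sketched with scikit-learn. This is a minimal illustration, not a benchmark: the synthetic dataset, the seed, and the choice of `alpha=0.5` for both models are assumptions made only for the example.

```python
import numpy as np
from sklearn.linear_model import Lasso, Ridge

# Hypothetical toy data: 30 samples, 10 features, only the first two matter.
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 10))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.5, size=30)

lasso = Lasso(alpha=0.5).fit(X, y)
ridge = Ridge(alpha=0.5).fit(X, y)

# Count coefficients that are exactly zero under each penalty.
n_zero_lasso = int(np.sum(lasso.coef_ == 0.0))
n_zero_ridge = int(np.sum(ridge.coef_ == 0.0))
print("Lasso zeroed:", n_zero_lasso, "| Ridge zeroed:", n_zero_ridge)
```

Note that `alpha` is scaled differently in the two estimators, so equal values do not mean equal penalty strength; the point is only that Lasso produces exact zeros while Ridge does not.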

The purpose of both techniques is to reduce model complexity in a controlled manner; however, the degree to which they accomplish this goal is highly sensitive to the strength of the regularization parameter used in training.
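
For reference, the two penalized objectives are commonly written as follows. The symbols are assumptions introduced here rather than notation from this article: $w$ is the weight vector, $\mathcal{L}_{\text{data}}$ is the unpenalized loss, and $\lambda \ge 0$ is the regularization strength (called `alpha` in scikit-learn).

```latex
\mathcal{L}_{\text{L1}}(w) = \mathcal{L}_{\text{data}}(w) + \lambda \sum_{j} |w_j|,
\qquad
\mathcal{L}_{\text{L2}}(w) = \mathcal{L}_{\text{data}}(w) + \lambda \sum_{j} w_j^{2}
```

Setting $\lambda = 0$ recovers the unregularized model, while a very large $\lambda$ lets the penalty dominate the data term and drives the weights toward zero.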

Challenges of Strong Regularization in Small Datasets

Potential Downsides

When applying regularization to small datasets, it’s essential to recognize that the scarcity of data can exaggerate the downsides of overly strong penalization:

Underfitting

In the context of small datasets, strong regularization can prevent a model from fitting the data well. With too heavy a penalty factor, the model may become so constrained that it fails to learn even the basic trends of the dataset. This state of underfitting is characterized by poor performance on both training and unseen data.
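
The effect can be observed directly by comparing training scores at a mild and a very heavy penalty. The sketch below uses synthetic data and two arbitrary `alpha` values chosen for illustration.

```python
import numpy as np
from sklearn.linear_model import Ridge

# Hypothetical toy data: 20 samples with a clear linear trend plus mild noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 3))
y = 2.0 * X[:, 0] + 1.0 * X[:, 1] + rng.normal(scale=0.3, size=20)

# Training R^2 for a mild penalty and a very heavy one.
train_r2 = {}
for alpha in (0.01, 1000.0):
    train_r2[alpha] = Ridge(alpha=alpha).fit(X, y).score(X, y)
    print(f"alpha={alpha}: training R^2 = {train_r2[alpha]:.3f}")
```

A low score on the training data itself is the signature of underfitting: the heavily penalized model cannot even reproduce the trend it was fitted on.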

Over-regularization

While regularization is designed to prevent overfitting, its excessive application on limited data can lead to what is known as over-regularization. In such cases, the model loses its ability to capture even the most essential patterns because every intricate nuance is suppressed by the strong constraints. This creates a model that is overly simplistic in mapping real-world relationships.

Loss of Informative Features

Small datasets inherently offer only a minimal amount of information, meaning that every data point is precious. Strong regularization may inadvertently penalize truly informative features along with noise, causing the model to miss out on insights that could have contributed significantly to its predictive performance.
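
With an L1 penalty this loss can be total. For scikit-learn's `Lasso` objective there is a threshold on `alpha` above which every coefficient is zero, including those of genuinely informative features. The sketch below computes that threshold on synthetic data; the dataset and the 0.05 and 1.05 multipliers are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.linear_model import Lasso

# Hypothetical toy data: feature 0 is genuinely informative, the rest are noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(25, 5))
y = 3.0 * X[:, 0] + rng.normal(scale=0.5, size=25)

# For scikit-learn's Lasso objective, all coefficients are zero once alpha
# reaches max_j |x_j . y| / n_samples, computed on centered X and y.
Xc, yc = X - X.mean(axis=0), y - y.mean()
alpha_max = np.max(np.abs(Xc.T @ yc)) / len(y)

mild = Lasso(alpha=0.05 * alpha_max).fit(X, y)
heavy = Lasso(alpha=1.05 * alpha_max).fit(X, y)
print("mild penalty coefficients: ", mild.coef_)
print("heavy penalty coefficients:", heavy.coef_)
```

Knowing this upper bound is useful when building a search grid: values of `alpha` above it all yield the same empty model.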

Striking the Right Balance

Tuning Parameters and Techniques

The principal challenge when working with small datasets is to strike an appropriate balance between preventing overfitting and ensuring that the model has enough flexibility to learn significant patterns. This involves:

- Tuning the Regularization Parameter: Use cross-validation techniques to determine the optimal regularization strength. In small datasets, even a slight over-penalization can have a big impact, so parameter tuning is critical.

- Selective Application of L1 versus L2: Depending on the data, L1 might offer advantages like sparse modeling, while L2 could provide smoother results. The specific needs of the dataset should guide which type, or combination, to use.

- Utilizing Alternative Techniques: Techniques such as early stopping (to cease training once overfitting starts to occur) and data augmentation (which artificially increases the dataset size) should be considered as complementary to regularization.

By carefully tuning these parameters and strategies, practitioners can mitigate the risk of underfitting while still guarding against the potential pitfalls of overfitting.
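
One way to tune both the penalty strength and the mix of L1 and L2 is a cross-validated grid search over scikit-learn's `ElasticNet`. This is a sketch under stated assumptions: the synthetic data, the grid values, and the use of mean squared error as the metric are illustrative choices, not recommendations.

```python
import numpy as np
from sklearn.linear_model import ElasticNet
from sklearn.model_selection import GridSearchCV

# Hypothetical toy data: 30 samples, 6 features, two of them informative.
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 6))
y = 2.0 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(scale=0.5, size=30)

# l1_ratio blends the penalties: near 1 behaves like L1, near 0 like L2.
param_grid = {
    "alpha": [0.01, 0.1, 1.0, 10.0],
    "l1_ratio": [0.1, 0.5, 0.9],
}
search = GridSearchCV(
    ElasticNet(max_iter=10_000),
    param_grid,
    cv=5,
    scoring="neg_mean_squared_error",
)
search.fit(X, y)
print("Best parameters:", search.best_params_)
```

On very small datasets the selected values can change noticeably between different fold assignments, so it is worth checking how stable the choice is before relying on it.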

Comparative Overview of Regularization Methods

| Aspect | L1 Regularization (Lasso) | L2 Regularization (Ridge) |
|---|---|---|
| Mechanism | Adds a penalty proportional to the sum of the absolute values of the coefficients. | Adds a penalty proportional to the sum of the squared coefficients. |
| Effect on Weights | Can set some coefficients to exactly zero (feature selection). | Shrinks all coefficients toward zero but typically does not zero them. |
| Sensitivity in Small Datasets | Might discard informative features if over-applied, making the model too sparse. | May overly constrain the model, causing underfitting if the penalty is too high. |
| When to Use | Effective when the dataset has many features and some may be irrelevant. | More suitable when many correlated features each contribute to the prediction. |

Best Practices for Applying Regularization in Small Datasets

Strategies and Considerations

Dealing with small datasets requires a thoughtful combination of techniques. Below we outline a series of best practices that can help in fine-tuning the regularization process:

1. Optimize via Cross-Validation

Cross-validation remains one of the most reliable methods for assessing model performance while tuning hyperparameters, including the regularization factor. K-fold cross-validation or leave-one-out strategies are particularly useful in small datasets as they make maximal use of limited data.
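
A leave-one-out comparison of a few penalty strengths might look like the sketch below. The data and the three candidate `alpha` values are assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import LeaveOneOut, cross_val_score

# Hypothetical toy data: 15 samples, 3 features.
rng = np.random.default_rng(0)
X = rng.normal(size=(15, 3))
y = 1.5 * X[:, 0] + rng.normal(scale=0.5, size=15)

# Each of the 15 folds holds out one sample. R^2 is undefined on a single
# test sample, so a per-sample error metric is used instead.
loo_mse = {}
for alpha in (0.1, 1.0, 10.0):
    scores = cross_val_score(
        Ridge(alpha=alpha), X, y,
        cv=LeaveOneOut(), scoring="neg_mean_squared_error",
    )
    loo_mse[alpha] = -scores.mean()
    print(f"alpha={alpha}: leave-one-out MSE = {loo_mse[alpha]:.3f}")

best_alpha = min(loo_mse, key=loo_mse.get)
print("Lowest leave-one-out MSE at alpha =", best_alpha)
```

Leave-one-out fits one model per sample, which is affordable precisely because the dataset is small.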

2. Consider Data Augmentation Techniques

When facing data scarcity, data augmentation can be an invaluable tool. By artificially increasing the training data—through methods specific to the domain, such as image rotations for vision tasks or synthetic data generation for tabular data—the model gains access to a broader sample, thus reducing the burden on regularization to generalize from very limited examples.
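
For tabular data, one simple form of augmentation is to append slightly perturbed copies of each row. The helper below is hypothetical (`jitter_augment` is not a library function), and it assumes that small Gaussian shifts in the inputs leave the target unchanged, which has to be judged per domain.

```python
import numpy as np

def jitter_augment(X, y, n_copies=3, noise_scale=0.05, seed=0):
    """Return X, y with n_copies noisy duplicates of every row appended.

    Hypothetical helper: noise is Gaussian, scaled per feature by
    noise_scale times that feature's standard deviation. Targets are
    copied unchanged, which assumes small input shifts do not alter y.
    """
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    feature_std = X.std(axis=0)
    noisy = [X + rng.normal(size=X.shape) * noise_scale * feature_std
             for _ in range(n_copies)]
    X_aug = np.vstack([X] + noisy)
    y_aug = np.concatenate([y] * (n_copies + 1))
    return X_aug, y_aug

X = np.array([[1.0, 20.0], [2.0, 35.0], [3.0, 30.0], [4.0, 50.0]])
y = np.array([2.0, 3.0, 4.0, 5.0])
X_aug, y_aug = jitter_augment(X, y)
print(X_aug.shape, y_aug.shape)  # (16, 2) (16,)
```

Augmentation should be applied only to the training portion of each cross-validation split; otherwise noisy copies of a validation row leak into training and inflate the scores.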

3. Feature Selection and Dimensionality Reduction

Small datasets often contain noise and irrelevant features that can hurt performance. Incorporating feature selection techniques such as Recursive Feature Elimination (RFE) or dimensionality reduction methods like Principal Component Analysis (PCA) can help ensure that the model focuses on the most informative inputs, thereby complementing regularization.
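
A sketch of RFE combined with a regularized model is shown below. The synthetic data, the choice of three retained features, and `alpha=1.0` are assumptions for illustration. The selector is placed inside a pipeline so that feature selection is repeated on each training fold.

```python
import numpy as np
from sklearn.feature_selection import RFE
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline

# Hypothetical toy data: 30 samples, 8 features, only the first two informative.
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 8))
y = 2.0 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.5, size=30)

# Keeping RFE inside the pipeline means features are re-selected on each
# training fold, so the held-out fold never influences the selection.
pipeline = Pipeline([
    ("select", RFE(Ridge(alpha=1.0), n_features_to_select=3)),
    ("model", Ridge(alpha=1.0)),
])
scores = cross_val_score(pipeline, X, y, cv=5, scoring="neg_mean_squared_error")
print("Mean CV MSE:", -scores.mean())

# Fit on all data to inspect which features survive.
pipeline.fit(X, y)
selected = np.flatnonzero(pipeline.named_steps["select"].support_)
print("Selected feature indices:", selected)
```

Selecting features on the full dataset before cross-validating would leak information from the validation folds, a mistake that is especially costly when there are few samples.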

4. Combine with Other Techniques

Alongside conventional regularization, techniques like early stopping—which halts training as soon as validation performance begins to degrade—can play an important role in maintaining a balance between learning and generalization.
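
In scikit-learn, one estimator that supports this directly is `SGDRegressor`. The sketch below is an illustration on synthetic data with assumed settings. Its stopping rule is a patience-based variant: training stops after `n_iter_no_change` consecutive epochs without sufficient improvement on the held-out split, rather than at the first dip.

```python
import numpy as np
from sklearn.linear_model import SGDRegressor

# Hypothetical toy data: 40 samples, 4 features on a comparable scale.
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 4))
y = 2.0 * X[:, 0] + rng.normal(scale=0.5, size=40)

sgd = SGDRegressor(
    penalty="l2",
    alpha=1e-4,               # mild L2 penalty alongside early stopping
    early_stopping=True,      # hold out part of the training data
    validation_fraction=0.2,  # 20% of samples monitor the validation score
    n_iter_no_change=5,       # stop after 5 epochs without improvement
    max_iter=1000,
    random_state=0,
)
sgd.fit(X, y)
print("Epochs run:", sgd.n_iter_)
```

The trade-off on a small dataset is that the validation split removes samples from training, so the held-out fraction should be chosen with care.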

Transfer learning, in which a model pre-trained on a larger dataset is fine-tuned on the smaller target set, also removes much of the burden of learning from scratch, which in turn reduces the need for heavy regularization.

Practical Insights and Implementation

Application Examples and Code

Practitioners often experiment with regularization strength by considering incremental adjustments. For instance, using grid search or Bayesian optimization to sample different regularization coefficients can help determine the optimal level for a given small dataset.

Below is an example snippet of Python code utilizing Scikit-Learn that demonstrates how to tune the regularization parameter using cross-validation:

# Import libraries

from sklearn.linear_model import Ridge

from sklearn.model_selection import GridSearchCV

# Define a small sample dataset (features X and target y)

# Note: this four-row toy dataset is for illustration only; substitute your own small dataset.

X = [[1, 2], [2, 3], [3, 4], [4, 5]]

y = [2, 3, 4, 5]

# Define the model and parameter grid

model = Ridge()

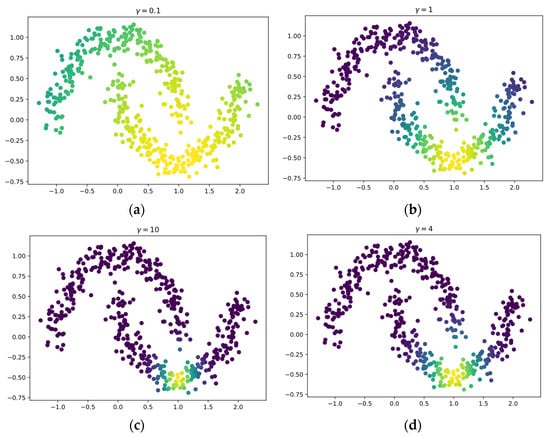

param_grid = { 'alpha': [0.1, 1.0, 10.0, 100.0] }

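# Use grid search with cross-validation. Score with negative MSE because the

# default R^2 is undefined on the single-sample folds this tiny dataset produces.

grid_search = GridSearchCV(model, param_grid, cv=3, scoring='neg_mean_squared_error')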

grid_search.fit(X, y)

# Output the best parameter set

print("Best alpha:", grid_search.best_params_['alpha'])

The objective here is not just to find a configuration that avoids overfitting, but also to ensure that the model is flexible enough to capture all essential patterns without being overly constrained by the regularization term.

Integrating Regularization with Broader Machine Learning Workflow

Holistic Approach to Model Training

It is important to view the role of regularization as part of a broader model training and refinement process. Especially when dealing with small datasets, combining several strategies can be the key to effective machine learning model development:

- Preprocessing and Cleaning: Ensure that the data is as noise-free as possible before any learning occurs. Effective preprocessing minimizes the need for overly aggressive regularization.

- Regularization and Hyperparameter Tuning: Deploy a systematic exploration of parameter values using robust evaluation techniques like cross-validation.

- Complementary Methods: Utilize data augmentation, early stopping, and transfer learning to enhance the dataset’s effective size and variability.

- Evaluation and Iteration: Continuously evaluate the model on validation data and remain ready to iterate by adjusting the regularization strength as more data or new insights become available.

By considering regularization as an integrated component of the overall machine learning pipeline, practitioners can leverage its advantages without sacrificing the model's capacity to learn from small datasets.
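
The workflow above can be sketched as a single scikit-learn pipeline in which preprocessing and the regularized model are tuned and evaluated together. The synthetic data, the step names, and the grid values are assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical toy data: 30 samples, 4 features on very different scales.
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 4)) * np.array([1.0, 10.0, 100.0, 0.1])
y = 2.0 * X[:, 0] + 0.05 * X[:, 2] + rng.normal(scale=0.5, size=30)

# Scaling inside the pipeline is refitted on each training fold, and it puts
# all coefficients on a comparable footing before the penalty is applied.
pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("model", Ridge()),
])
param_grid = {"model__alpha": [0.01, 0.1, 1.0, 10.0, 100.0]}
search = GridSearchCV(pipeline, param_grid, cv=5, scoring="neg_mean_squared_error")
search.fit(X, y)

print("Best alpha:", search.best_params_["model__alpha"])
print("Cross-validated MSE:", -search.best_score_)
```

When more data becomes available, rerunning the same search is a cheap way to check whether a weaker penalty has become appropriate.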

Last updated March 5, 2025