Strategic Imperatives: Enhancing Clarity and Execution for Key Project Milestones

A detailed refinement of your project's functional walkthroughs, technical documentation, and automation strategies for optimal outcomes.

The project moves faster when every component is clearly defined and understood. The breakdown below expands on the points discussed, adding best practices and strategic considerations for each.

Key Strategic Enhancements

- Comprehensive Functional Review: Implementing structured walkthroughs to ensure all stakeholders align on software functionality and user experience, catching potential issues early.

- Robust Technical Blueprints: Developing detailed and accessible technical documentation for the "EA Feed" to facilitate understanding, maintenance, and future development.

- Advanced Automation Framework: Establishing a sophisticated functional automation strategy that covers deployment, data management, validation, and dependency mocking for thorough and efficient testing.

1. Functional Walkthrough – Facilitated by Nikhil

Ensuring Shared Understanding and Early Issue Detection

The functional walkthrough, led by Nikhil, is a critical process for collaboratively reviewing the application's features, user workflows, and overall behavior. It is not merely a presentation but an interactive session designed to confirm that the software meets its functional requirements and provides a positive user experience.


Key Objectives and Enhancements:

- Structured Review Process: Adopt a systematic approach, guiding stakeholders (developers, testers, business analysts, product owners) step-by-step through each key function. This helps in meticulously evaluating the software against predefined requirements and user stories.

- Cognitive Walkthrough Techniques: Incorporate elements of cognitive walkthroughs, where participants simulate being a new user. This helps assess the ease of learning and use, identifying potential usability hurdles or confusing interactions.

- Early Identification of Discrepancies: The primary goal is to uncover misunderstandings, design flaws, missing functionalities, or deviations from requirements early in the development lifecycle, when they are less costly to rectify.

- Interactive Feedback and Documentation: Encourage active participation and gather detailed feedback. Document all findings, action items, and decisions in a shared repository. Visual aids like mockups, prototypes, or flowcharts can enhance clarity.

- Scope Confirmation: Validate that the scope of the features being reviewed aligns with stakeholder expectations and project goals.

By enhancing this walkthrough, Nikhil can foster better cross-team collaboration, reduce the risk of late-stage requirement changes, and ensure the final product is well-aligned with user needs and business objectives.

2. Technical Documentation of EA Feed – Spearheaded by Sundar

Building a Foundation of Clarity and Maintainability

Sundar's responsibility for the technical documentation of the "EA Feed" is pivotal. "EA Feed" could refer to an Enterprise Architecture (EA) data feed, detailing how information flows between various enterprise systems, or it might pertain to Front-End Engineering Design (FEED) documentation, which outlines the preliminary design and specifications of a system or project before detailed design and construction.

Regardless of the precise interpretation, the goal is to create a comprehensive, clear, and accessible technical blueprint. This documentation serves as the definitive source of truth for the system's architecture, components, data structures, integration points, and operational procedures.

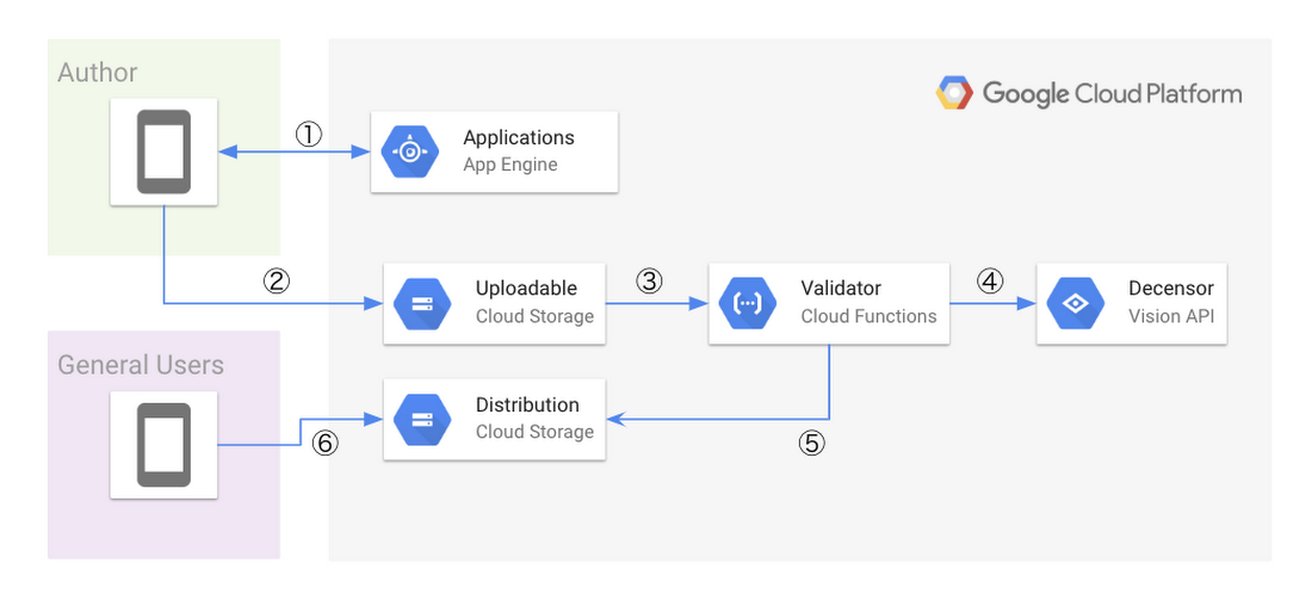

Example of a system architecture diagram, crucial for technical documentation.

Essential Components and Enhancements:

- Architectural Overview: Detailed diagrams and descriptions of the system's architecture, including all components, modules, and their interactions.

- Data Model and Flow: Comprehensive documentation of data structures, schemas, data dictionaries, and how data flows through the feed. This is crucial for understanding data lineage and impact analysis.

- API Specifications: If APIs are involved, detailed specifications (e.g., OpenAPI/Swagger) for each endpoint, including request/response formats, authentication methods, and rate limits.

- Integration Points: Clear descriptions of how the EA Feed integrates with other systems, including protocols, data formats, and dependencies.

- Deployment and Operational Guidelines: Instructions for deploying, configuring, monitoring, and troubleshooting the feed. This should include dependencies, environmental requirements, and common issues.

- Security Considerations: Documentation of security measures, access controls, data encryption, and compliance aspects.

- Version Control and Updates: Establish a process for maintaining and versioning the documentation as the system evolves. Ensure it's stored in an accessible central repository.

- Clarity and Consistency: The documentation must be written clearly, be consistent in terminology, and be easy for various stakeholders (developers, operations, new team members) to understand.

Thorough documentation by Sundar will reduce ambiguity, streamline onboarding for new team members, support maintenance and troubleshooting efforts, and provide a solid foundation for future enhancements or integrations.
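One way to keep the data dictionary from drifting away from the code is to generate it from the schema definition. The sketch below is illustrative only: `FeedRecord` and its fields are hypothetical, since the actual EA Feed schema is not described here, and the table layout is an assumption.

```python
from dataclasses import dataclass, field, fields


@dataclass
class FeedRecord:
    """Hypothetical record; the real EA Feed fields are not given in this document."""

    record_id: str = field(metadata={
        "description": "Unique identifier of the record",
        "source": "upstream system",
    })
    amount: float = field(metadata={
        "description": "Monetary amount carried by the record",
        "source": "upstream system",
    })
    status: str = field(metadata={
        "description": "Processing status assigned by the feed",
        "source": "feed",
    })


def data_dictionary(cls) -> str:
    """Render a dataclass as a pipe-delimited data dictionary table."""
    rows = ["| Field | Type | Description | Source |", "|---|---|---|---|"]
    for f in fields(cls):
        # f.type is a class here, but may be a string under postponed annotations.
        type_name = f.type if isinstance(f.type, str) else f.type.__name__
        description = f.metadata.get("description", "")
        source = f.metadata.get("source", "")
        rows.append(f"| {f.name} | {type_name} | {description} | {source} |")
    return "\n".join(rows)


print(data_dictionary(FeedRecord))
```

Regenerating the table as part of the build keeps the published dictionary aligned with the schema the code actually uses.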

3. Functional Automation Strategy – Orchestrated by Pallavi

Driving Efficiency and Reliability in Testing

Pallavi's role in defining and implementing the functional automation strategy is key to ensuring software quality and accelerating delivery. This involves creating automated scripts and processes that validate the application's functionality against its requirements. The outlined capabilities form a solid base for a comprehensive automation suite.

Core Automation Capabilities:

Automated Deployment of Feed Image:

The automation script must be capable of retrieving the specified feed image (e.g., a Docker container image) and deploying it as a pod (the smallest deployable unit in Kubernetes) in a designated test environment. The deployment should apply specific test parameters or configurations so that the environment matches what the tests require.

Enhancement: Implement robust error handling for deployment failures (e.g., image not found, pod creation errors) and integrate with orchestration tools for dynamic environment management and teardown.
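As a minimal sketch of this deployment step, assuming the test environment is a Kubernetes cluster reachable through `kubectl`, the script could build a Pod manifest that carries the test parameters as environment variables and apply it. The pod name, label, container name, and the `DeploymentError` class are hypothetical choices for illustration.

```python
import json
import subprocess


class DeploymentError(RuntimeError):
    """Raised when the pod cannot be created."""


def build_pod_manifest(name, image, test_params):
    """Return a Kubernetes Pod manifest that injects test parameters as env vars."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"purpose": "functional-test"}},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "feed",  # hypothetical container name
                    "image": image,
                    "env": [
                        {"name": key, "value": str(value)}
                        for key, value in sorted(test_params.items())
                    ],
                }
            ],
        },
    }


def deploy_pod(manifest, namespace, run=subprocess.run):
    """Apply the manifest with kubectl; `run` is injectable so tests can stub it."""
    result = run(
        ["kubectl", "apply", "--namespace", namespace, "-f", "-"],
        input=json.dumps(manifest),
        capture_output=True,
        text=True,
    )
    if result.returncode != 0:
        raise DeploymentError(result.stderr.strip() or "kubectl apply failed")
    return manifest["metadata"]["name"]
```

A failed image pull usually surfaces only after the pod has been accepted, so a fuller script would also poll the pod status (for example with `kubectl wait --for=condition=Ready`) before starting the tests, and delete the pod during teardown.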

Test Data Management with GCP Bucket:

The automation framework needs to manage test data effectively. This includes the ability to push test data, specifically in XML format, to a Google Cloud Platform (GCP) storage bucket. This centralized storage facilitates scalable and repeatable testing.

Enhancement: Incorporate mechanisms for test data generation, versioning, and cleanup. Ensure secure handling of sensitive data, potentially using GCP's security features like encryption and IAM roles.
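The sketch below shows one possible shape for the test-data step: generate a small XML document, give it a versioned object name, and upload it with the `google-cloud-storage` client library. The XML element names and the `test-data/<suite>/<version>/` path layout are assumptions made for illustration; the upload additionally assumes the library is installed and credentials are configured.

```python
import xml.etree.ElementTree as ET


def build_test_xml(records):
    """Serialise a list of dicts into a small XML document (hypothetical schema)."""
    root = ET.Element("feed")
    for record in records:
        item = ET.SubElement(root, "record", id=str(record["id"]))
        for key, value in record.items():
            if key != "id":
                ET.SubElement(item, key).text = str(value)
    return ET.tostring(root, encoding="unicode")


def object_name(suite, data_version, case):
    """Versioned object path so runs are repeatable and easy to clean up."""
    return f"test-data/{suite}/{data_version}/{case}.xml"


def upload_xml(bucket_name, name, xml_text):
    """Upload the XML to a GCS bucket and return its gs:// URI."""
    # Imported lazily so the helpers above work without the GCP library installed.
    from google.cloud import storage

    client = storage.Client()
    blob = client.bucket(bucket_name).blob(name)
    blob.upload_from_string(xml_text, content_type="application/xml")
    return f"gs://{bucket_name}/{name}"
```

Keeping the data version in the object path lets a cleanup job remove everything under one prefix once a test cycle is finished.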

Database-Level Functional Validation:

In scenarios where APIs are not available or suitable for certain validations, the automation scripts must be able to interact directly with the database. This involves executing queries to verify data integrity, data transformations, and the correctness of business logic implemented at the data layer.

Enhancement: Develop reusable database validation modules or stored procedures. Ensure that tests clean up any data they create or modify to maintain a consistent database state for subsequent tests.
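A reusable validation helper with built-in cleanup might look like the following sketch. It uses an in-memory SQLite database purely as a stand-in, because the project's actual database is not named here; the `feed_items` table and its columns are hypothetical.

```python
import sqlite3


def fetch_scalar(conn, sql, params=()):
    """Run a query expected to return a single value."""
    row = conn.execute(sql, params).fetchone()
    return row[0] if row else None


def assert_row_count(conn, table, expected, where="1=1", params=()):
    """Reusable check: the table holds the expected number of matching rows."""
    # The table name is interpolated, so pass only trusted, test-owned names.
    actual = fetch_scalar(conn, f"SELECT COUNT(*) FROM {table} WHERE {where}", params)
    if actual != expected:
        raise AssertionError(f"{table}: expected {expected} rows, found {actual}")


def run_isolated(conn, test_body):
    """Run test_body, then roll back so the database state is unchanged."""
    try:
        test_body(conn)
    finally:
        conn.rollback()


# Demo against an in-memory database standing in for the real one.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE feed_items (id INTEGER PRIMARY KEY, status TEXT)")
conn.commit()


def check_insert(c):
    c.execute("INSERT INTO feed_items (status) VALUES (?)", ("LOADED",))
    assert_row_count(c, "feed_items", 1, "status = ?", ("LOADED",))


run_isolated(conn, check_insert)
assert_row_count(conn, "feed_items", 0)  # the inserted row was rolled back
```

Rolling back works when the test owns the transaction. If the application under test commits its own writes, the cleanup would instead need explicit deletes keyed on test-specific identifiers.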

Service Virtualization with WireMock for DMP/DQ Checks:

To enable isolated and reliable testing, especially for interactions with external systems like Data Management Platforms (DMP) or Data Quality (DQ) services, WireMock (or a similar service virtualization tool) must be set up. This allows the simulation of responses from these external dependencies, ensuring tests can run even if those services are unavailable or unstable.

Enhancement: Create a library of mock responses for various scenarios, including success cases, error conditions, and edge cases. Ensure easy configuration and switching between mock services and real services.
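The mock-response library could be kept as a set of WireMock stub mappings that the tests register through WireMock's admin endpoint (`/__admin/mappings`). The sketch below assumes a WireMock server is already running. The `/dq/validate` path and the response payloads are placeholders, since the real DMP/DQ contracts are not described here.

```python
import json
import urllib.request


def build_stub(method, url_path, status, body):
    """Return a WireMock stub mapping that answers `method url_path` with JSON."""
    return {
        "request": {"method": method, "urlPath": url_path},
        "response": {
            "status": status,
            "headers": {"Content-Type": "application/json"},
            "jsonBody": body,
        },
    }


# Hypothetical DQ scenarios: the path and payloads are placeholders.
DQ_SCENARIOS = {
    "success": build_stub("POST", "/dq/validate", 200, {"result": "PASS"}),
    "rejected": build_stub("POST", "/dq/validate", 422, {"result": "FAIL"}),
    "outage": build_stub("POST", "/dq/validate", 503, {"error": "unavailable"}),
}


def register_stub(wiremock_url, stub):
    """POST the mapping to WireMock's admin API and return the HTTP status code."""
    request = urllib.request.Request(
        f"{wiremock_url}/__admin/mappings",
        data=json.dumps(stub).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


def dq_base_url(use_mock, mock_url, real_url):
    """Single switch between the mocked and the real service."""
    return mock_url if use_mock else real_url
```

Because all three scenarios match the same request, a test would register only the one it needs and clear the mappings afterwards. Routing every client through `dq_base_url` keeps the mock/real switch in one configuration flag.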

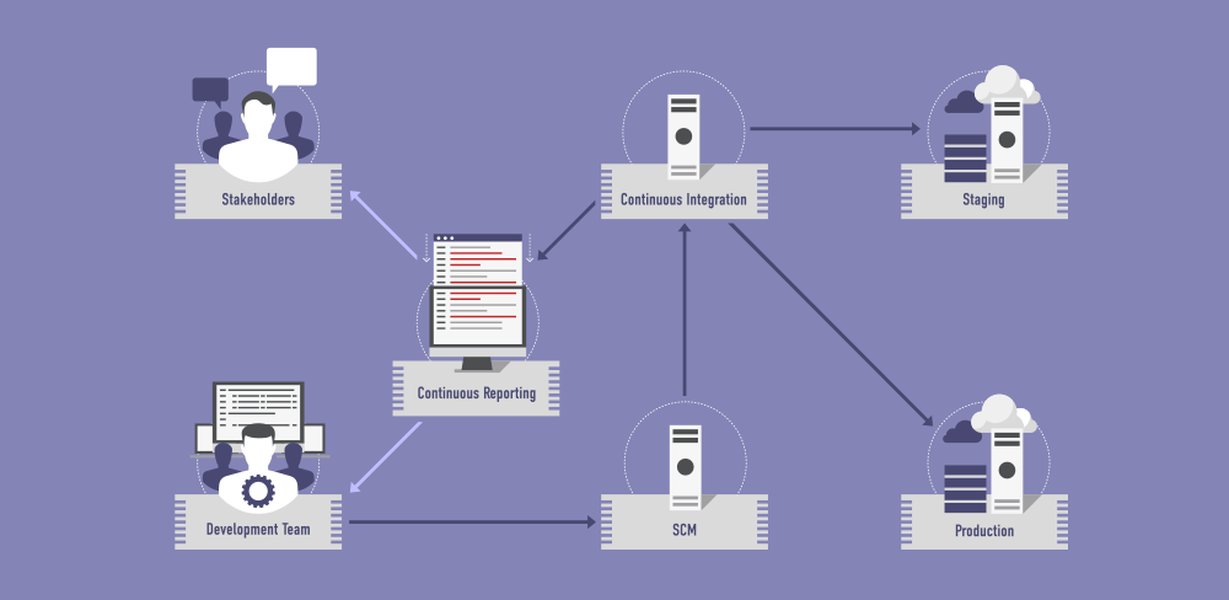

Conceptual diagram of a software test automation framework.

Broader Strategic Automation Considerations:

- Test Case Design and Reusability: Develop well-defined, modular, and reusable test cases that are easy to maintain.

- Choice of Automation Framework and Tools: Select appropriate automation tools and frameworks (e.g., Selenium, Cypress, Playwright, REST Assured) that fit the application's technology stack and testing requirements.

- Integration with CI/CD Pipeline: Seamlessly integrate automated tests into the Continuous Integration/Continuous Deployment (CI/CD) pipeline to enable automated regression testing with every build.

- Comprehensive Reporting and Analytics: Implement robust reporting mechanisms that provide clear insights into test execution status, pass/fail rates, code coverage, and identified defects.

- Regression Testing Strategy: Define a clear strategy for regression testing to ensure that new changes do not adversely affect existing functionalities.

- Scalability and Parallel Execution: Design the automation suite to be scalable and support parallel test execution to reduce overall testing time.

- Maintainability: Emphasize coding standards, comments, and documentation for automation scripts to ensure they are maintainable by the team.
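To illustrate the parallel-execution and reporting points above, here is a minimal sketch that runs independent test callables concurrently and summarises the results. It is not tied to any particular framework, and the function names and result format are hypothetical. In practice a test runner's own parallel mode and reporters would usually fill this role.

```python
from concurrent.futures import ThreadPoolExecutor


def run_case(name, func):
    """Run one test callable and capture its outcome instead of raising."""
    try:
        func()
        return {"name": name, "passed": True, "error": None}
    except Exception as exc:  # broad on purpose: any failure is a test failure
        return {"name": name, "passed": False, "error": f"{type(exc).__name__}: {exc}"}


def run_suite(cases, max_workers=4):
    """Run independent test cases in parallel; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(run_case, name, func) for name, func in cases]
        return [future.result() for future in futures]


def summarize(results):
    """Aggregate per-case results into the headline numbers of a report."""
    passed = sum(1 for r in results if r["passed"])
    return {
        "total": len(results),
        "passed": passed,
        "failed": len(results) - passed,
        "pass_rate": passed / len(results) if results else 0.0,
    }
```

Parallel execution is only safe when test cases do not share mutable state, which is one more reason to give each test its own data and cleanup.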

Visualizing Key Process Attributes

The following radar chart provides a comparative visualization of the desired maturity levels across key attributes for the functional walkthrough, technical documentation, and functional automation processes. Ideal scores (closer to the outer edge) represent high effectiveness and maturity in each area. This helps in identifying areas that might need more focus to achieve a balanced and robust project execution strategy.

Interconnected Project Components Mindmap

This mindmap illustrates how the functional walkthrough, technical documentation, and functional automation are interconnected and contribute to the overall project success. Each main branch represents one of the core activities, with sub-branches detailing key objectives, tasks, or considerations for that activity.

- Functional Walkthrough (Led by Nikhil)
  - Objective: Shared Understanding
  - Process: Structured Review
  - Key Outcome: Early Issue Detection
  - Technique: Cognitive Walkthroughs
  - Output: Documented Feedback
- Technical Documentation (EA Feed - Led by Sundar)
  - Objective: Clarity & Maintainability
  - Content: Architecture, Data Flow, APIs
  - Key Outcome: Single Source of Truth
  - Audience: Devs, Ops, New Hires
  - Process: Version Control & Updates
- Functional Automation (Led by Pallavi)
  - Objective: Efficient & Reliable Testing
  - Task: Pull Feed Image & Deploy to Pod
  - Task: Push XML Data to GCP Bucket
  - Task: DB Validation (No API)
  - Task: WireMock for DMP/DQ
  - Strategy: CI/CD Integration
  - Strategy: Regression Testing
  - Output: Test Reports & Analytics

Summary of Responsibilities and Key Focus Areas

The following table summarizes the core responsibilities and primary focus areas for Nikhil, Sundar, and Pallavi, ensuring alignment and clarity on their respective contributions to the project's success.

| Team Lead | Area of Responsibility | Primary Objective | Key Deliverables/Activities |
|---|---|---|---|
| Nikhil | Functional Walkthrough | Ensure shared understanding of functionality and identify issues early. | Conducting structured reviews, facilitating stakeholder discussions, documenting feedback and action items. |
| Sundar | Technical Documentation of EA Feed | Create a comprehensive and clear technical blueprint for the EA Feed. | Detailing architecture, data models, APIs, integration points, operational guidelines, and ensuring documentation is versioned and accessible. |
| Pallavi | Functional Automation | Implement an efficient and reliable automated testing strategy. | Developing scripts for image deployment, GCP data handling, database validation, WireMock setup, CI/CD integration, and reporting. |



Recommended Further Exploration

- What are advanced techniques for conducting effective cognitive walkthroughs in agile development?

- How can we establish a living documentation strategy for evolving Enterprise Architecture feeds?

- What are the best practices for integrating automated functional tests with complex CI/CD pipelines involving containerized microservices?

- How can AI-driven tools enhance functional test automation coverage and maintenance?


Last updated May 20, 2025